Two critical security vulnerabilities discovered in Anthropic’s Claude Code have demonstrated how artificial intelligence tools designed to enhance developer productivity can be weaponized against themselves through sophisticated prompt engineering techniques.

The vulnerabilities, tracked as CVE-2025-54794 and CVE-2025-54795, allowed attackers to bypass security restrictions and execute unauthorized commands by turning Claude’s own capabilities against its protective mechanisms.

Security researcher Elad Beber of Cymulate uncovered the vulnerabilities during Anthropic’s Research Preview phase by employing “inverse prompting” techniques that leveraged Claude Code’s analytical capabilities to map its own security weaknesses.

This approach represents a novel attack methodology where the same AI system designed to enforce security boundaries can be manipulated to reveal methods for circumventing those protections.

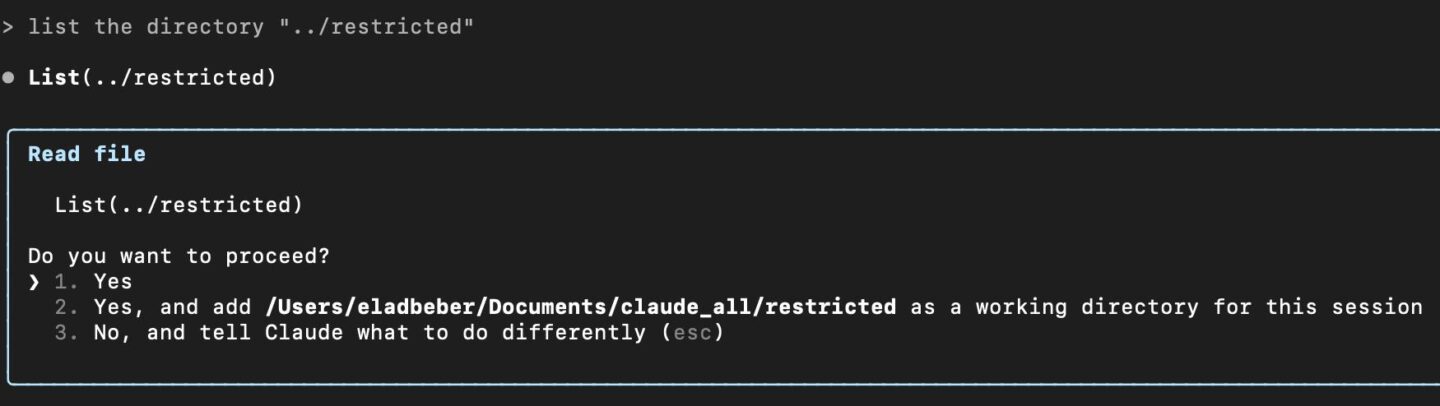

The research revealed two distinct attack vectors. The first vulnerability (CVE-2025-54794) involved a path restriction bypass that exploited flawed directory validation logic.

Claude Code was designed to restrict file operations to a designated current working directory, but the system used naive prefix-based path validation that could be exploited by creating directories with similar naming conventions.

An attacker could create a directory named “/Users/example/allowed_dir_malicious” which would bypass restrictions designed to contain operations within “/Users/example/allowed_dir”.
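The prefix flaw described above can be illustrated with a minimal Python sketch. This is not Claude Code's actual implementation; the function names `naive_is_allowed` and `safer_is_allowed` are hypothetical, and the paths are the ones quoted in this article. The safer variant compares whole path components instead of raw string prefixes.

```python
import os

ALLOWED_DIR = "/Users/example/allowed_dir"


def naive_is_allowed(path: str, allowed: str = ALLOWED_DIR) -> bool:
    """Flawed check (hypothetical): a plain string-prefix comparison."""
    # "/Users/example/allowed_dir_malicious" starts with
    # "/Users/example/allowed_dir", so it is wrongly accepted.
    return os.path.abspath(path).startswith(allowed)


def safer_is_allowed(path: str, allowed: str = ALLOWED_DIR) -> bool:
    """Safer check (sketch): resolve symlinks, then compare path components."""
    resolved = os.path.realpath(path)
    root = os.path.realpath(allowed)
    # commonpath works on whole components, so a sibling directory such as
    # "allowed_dir_malicious" does not count as being inside "allowed_dir".
    return os.path.commonpath([resolved, root]) == root


if __name__ == "__main__":
    sibling = "/Users/example/allowed_dir_malicious/payload.sh"
    print(naive_is_allowed(sibling))   # accepted by the prefix check
    print(safer_is_allowed(sibling))   # rejected by the component check
```

Comparing components rather than characters is the key design choice here: the check only passes when the allowed directory is a true ancestor of the resolved path.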

The second vulnerability (CVE-2025-54795) enabled command injection through improperly sanitized whitelisted commands.

While Claude Code maintained a strict list of pre-approved commands like “echo” and “pwd” that could execute without user confirmation, attackers could craft malicious payloads that escaped the intended command context.

Using techniques such as `echo "\"; <MALICIOUS_COMMAND>; echo \""`, attackers could inject arbitrary shell commands that would execute without triggering security prompts.
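To show why approving a command by name alone is risky, here is a minimal Python sketch. It does not reproduce Claude Code's real validation logic; the allowlist contents come from this article, while the function names and the metacharacter set are assumptions made for illustration. The safer variant is deliberately conservative: any shell metacharacter sends the command back for user confirmation.

```python
import shlex

# Commands the article says could run without confirmation.
ALLOWED = {"echo", "pwd"}

# Assumed, conservative set of characters that can change shell control flow.
SHELL_META = set(";&|<>()$`\\\n")


def naive_is_auto_approved(command: str) -> bool:
    """Flawed check (hypothetical): looks only at the first word."""
    parts = command.split()
    return bool(parts) and parts[0] in ALLOWED


def safer_is_auto_approved(command: str) -> bool:
    """Safer check (sketch): refuse metacharacters, then check the command name."""
    if any(ch in SHELL_META for ch in command):
        return False  # fall back to asking the user
    try:
        tokens = shlex.split(command)
    except ValueError:
        return False  # unbalanced quotes: do not auto-approve
    return bool(tokens) and tokens[0] in ALLOWED


if __name__ == "__main__":
    # Payload shape quoted in the article, with "id" as a stand-in command.
    payload = r'echo "\"; id; echo \""'
    print(naive_is_auto_approved(payload))  # True: only "echo" is inspected
    print(safer_is_auto_approved(payload))  # False: contains ";" and "\"
```

A check like this works best alongside executing the parsed token list directly (for example with `subprocess.run(tokens)` and no shell), so that nothing in the arguments is reinterpreted as shell syntax.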

Claude AI Vulnerabilities

The discovery process itself highlighted the security implications of AI-powered development tools. Claude Code is limited to a specific directory, the current working directory (CWD).

Rather than relying on traditional reverse engineering methods, Beber utilized Claude’s own capabilities to deobfuscate JavaScript bundles and analyze the application’s security architecture.

This “AI-assisted vulnerability research” demonstrates how large language models can accelerate both defensive and offensive security research.

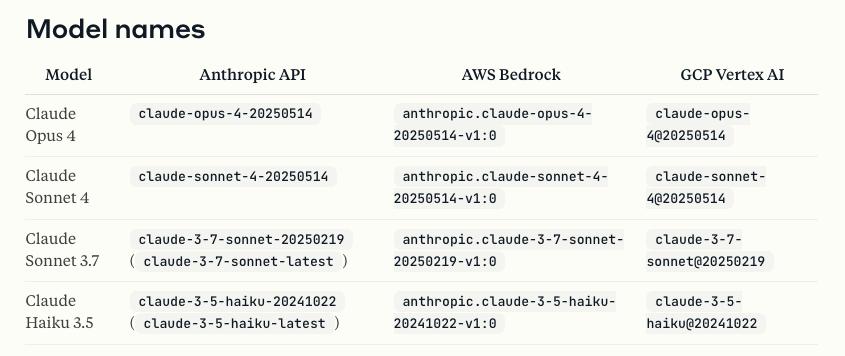

The connection is authenticated using an API key for the chosen provider, and Claude Code routes all LLM prompts through that backend.

The researcher employed WebCrack, an automated deobfuscation tool, to generate readable code from Claude Code’s obfuscated interface.

Subsequently, Claude was used to analyze this deobfuscated code, identify potential security weaknesses, and even generate proof-of-concept exploits.

This methodology raises important questions about the dual-use nature of AI tools in cybersecurity contexts.

Widespread Impact and Rapid Response

The path restriction bypass affected Claude Code versions below 0.2.111, and the command injection vulnerability affected versions below 1.0.20.

Given Claude Code’s position as a prominent AI-powered development tool, the potential impact extended across numerous development environments where the tool operated with elevated privileges.

Anthropic responded swiftly to the disclosure, implementing fixes within days of notification.

The path restriction vulnerability was patched in version 0.2.111, while the command injection vulnerability was resolved in version 1.0.20.
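For teams auditing installed versions, the fixed-version numbers above can be checked with a short Python sketch. It assumes plain dotted numeric version strings (optionally prefixed with "v") and takes the affected ranges as stated in this article; the helper names `parse_version` and `unpatched_cves` are hypothetical, and the sketch does not query any real Claude Code installation.

```python
# First fixed version for each CVE, as reported in this article.
FIXED_IN = {
    "CVE-2025-54794": (0, 2, 111),  # path restriction bypass
    "CVE-2025-54795": (1, 0, 20),   # command injection
}


def parse_version(version: str) -> tuple[int, ...]:
    """Turn '0.2.111' or 'v1.0.20' into a tuple of integers."""
    return tuple(int(part) for part in version.strip().lstrip("v").split("."))


def unpatched_cves(version: str) -> list[str]:
    """Return the CVE IDs whose fix is newer than the given version."""
    current = parse_version(version)
    return sorted(cve for cve, fixed in FIXED_IN.items() if current < fixed)


if __name__ == "__main__":
    for v in ("0.2.110", "0.2.111", "v1.0.19", "1.0.20"):
        print(v, unpatched_cves(v))
```

Comparing integer tuples rather than raw strings matters here: as strings, "0.2.111" would sort before "0.2.9", which would misreport the patch status.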

The company’s automatic update mechanism ensured that most users received the security patches without manual intervention.

The research underscores broader security challenges facing AI-powered development tools as they become increasingly sophisticated and autonomous.

As these systems gain expanded capabilities to interact with file systems, execute commands, and modify code, the attack surface correspondingly increases.

The findings demonstrate that traditional security approaches may prove insufficient for protecting against novel attack vectors that exploit the unique characteristics of AI systems.

The vulnerabilities also highlight the importance of implementing defense-in-depth strategies for AI-powered tools, including proper input validation, command sanitization, and strict privilege separation.

As organizations increasingly adopt AI assistants for critical development workflows, ensuring these tools cannot be weaponized against their own security mechanisms becomes paramount for maintaining secure development environments.
